83

October 2016

ARTICLES

The results of the IMPALA project (Impact Analysis of External Quality Assurance)

Anna Prades - Project manager

The IMPALA project, which was launched in 2013, came to an end in September 2016 with a series of activities to disseminate the project in the participating countries. AQU Catalunya's dissemination activity was the workshop "External quality assurance: what is its purpose?"

It is now time to take stock of the project and what has been achieved.

What is the purpose of analysing the impact of external quality assurance?

External quality assurance in the European Union has been developed as a mechanism to promote trust in the quality of higher education institutions and thereby improve the mobility of students, teaching staff and professionals (AQU, 2003). The Berlin Communiqué underpinned the fact that quality assurance (QA) agencies are at the heart of the European Higher Education Area.

More than twenty years on from the launching of the experimental project on institutional review in 1993, we now need to inquire about the performance of a series of procedures that were originally sporadic but then turned into a “flurry of QA activity that has led to the setting up of multiple organic structures in different countries” (Rodríguez, 2013: 21).

The IMPALA project was also framed by the trend towards evidence-based policies, the purpose of which is not only to satisfy demands for accountability in the achievement of the benefits that policies promote, but also to help explain the keys to the success, or failure, of policies, and more particularly of QA policies. In short, it boils down to asking what changes when an external QA review is carried out.

Objectives

The centrepiece of the IMPALA project is the establishment of a common, flexible methodology to evaluate the impact of different external QA procedures. Analysis of this impact should also provide a better understanding of how external QA procedures function, with this knowledge ultimately contributing to the establishment of new evidence-based policies for quality assurance.

Methodology

The project is based on a before-after comparison approach, i.e. the data obtained prior to an external review are compared with those obtained six months afterwards. The pre-external review data serve as the counterfactual: they outline how the situation would be without an external review, so that any changes observed afterwards can be attributed to the external review.
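As a minimal sketch of the before-after comparison described above, and not the project's actual analysis, the following Python snippet computes the mean change between a baseline and an endline survey for respondents who answered both. The respondent identifiers, the 1 to 5 response scale, the scores and the function name `before_after_change` are all assumptions made purely for illustration.

```python
from statistics import mean


def before_after_change(baseline, endline):
    """Mean paired change (endline minus baseline) for respondents in both waves.

    The baseline is treated as the counterfactual, so the mean change is read
    as the effect of the external review under that assumption.
    """
    shared = sorted(set(baseline) & set(endline))
    if not shared:
        raise ValueError("no respondents answered both surveys")
    diffs = [endline[r] - baseline[r] for r in shared]
    return mean(diffs), len(shared)


# Hypothetical scores on an assumed 1-5 scale, keyed by respondent id.
baseline = {"r1": 2, "r2": 3, "r3": 4, "r4": 3}
endline = {"r1": 3, "r2": 3, "r3": 5, "r5": 4}

change, n = before_after_change(baseline, endline)
print(f"mean change = {change:.2f} over {n} paired respondents")
```

Only respondents present in both waves are compared. The interpretation rests on the same assumption as the design itself: that nothing other than the external review changed between the two surveys.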

The theoretical framework draws on theories of the causal mechanisms of social change, with the aim of explaining the aspects of internal and external quality assurance that underlie these changes (Leiber et al., 2015).

Partners

The core project was implemented by four European quality assurance agencies together with four higher education institutions from Finland, Germany, Romania and Spain.

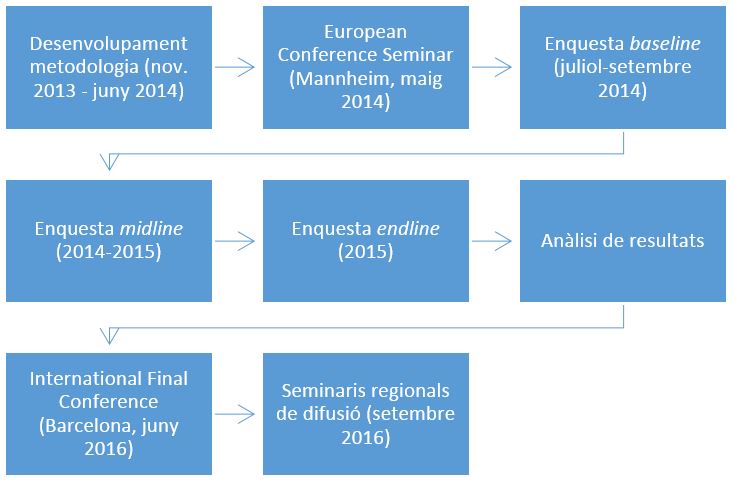

The initial stage of the IMPALA project dealt with the design of the impact evaluation questionnaire, which culminated in the Conference Seminar, Impact Analysis of External Quality Assurance in Higher Education Institutions: Methodology and Its Relevance for Higher Education Policy, held in Mannheim (May 2014).

Table 1. Stages of the IMPALA project

The methodology was then implemented in the form of three surveys, one prior to the external review (baseline), one during the site visit (midline) and a final survey six months after the external review (endline).

The results and findings of these surveys carried out in four countries were presented at the International Final Conference in Barcelona on 16 and 17 June 2016.

Results of the IMPALA project

Aspects that had changed and others that had not changed were identified in the four participating countries. Aside from the specific results, which are available on the project website, a summary is given here of the main implications of the project for the methodology used in Catalonia.

In order to evaluate the change in formative methodologies, analysis of the impact of other QA processes is necessary

The results and findings in Catalonia show that programme accreditation has not led to changes in teaching methodologies (for example, fewer lectures).

The explanation for there being no changes lies in the role played (as well as not played) by accreditation in the VSMA framework. The methodology is designed in the validation stage (ex-ante accreditation), and its functioning (results, satisfaction, etc.) is analysed periodically as part of programme monitoring, on the basis of which any resulting modifications are put forward. The aim of accreditation as a QA procedure is not for changes to be made to programmes, and it is in fact incompatible in time with any modification procedure. Moreover, any modification during the process of accreditation would appear to be inconsistent with the correct running of a programme of study (if everything is working well, why change it?). In this regard, accreditation is not a good instrument for assessing change.

Accreditation does however have a very important impact, in particular because its legal outcome either gives the programme being evaluated licence to continue to run or, where it is unsuccessful, leads to the programme being withdrawn.

Accreditation serves to raise awareness of the different instruments used to monitor and enhance programmes of study

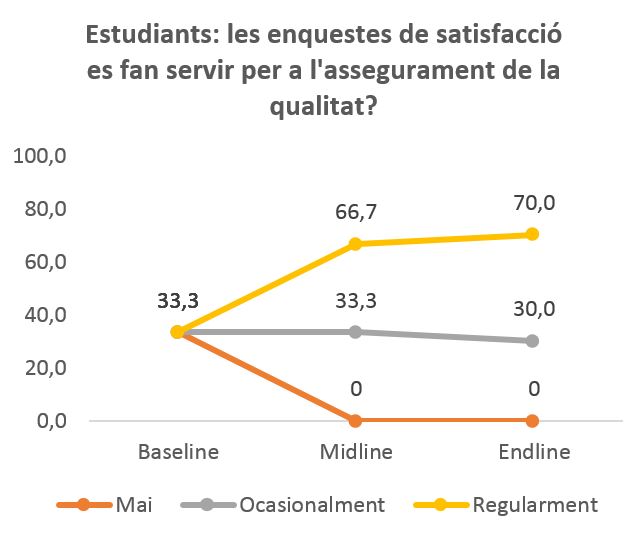

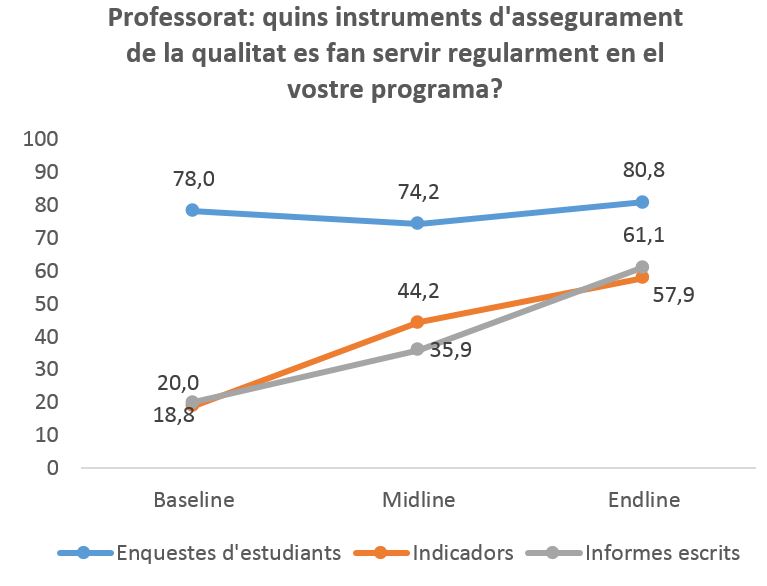

The results show how students have become aware that surveys are used for programme monitoring. Teaching staff, on the other hand, while being familiar with the way in which this instrument is used, were not so aware of the use of indicators and written reports as mechanisms for the follow-up and monitoring of programme quality.

Figure 1. Students (categories shown: Never, Occasionally, Regularly)

Figure 2. Teaching staff (categories shown: Student surveys, Indicators, Written reports)

The more involved teaching staff become in internal quality assurance, the better their attitude to QA procedures in general

Changes in the attitudes of teaching staff to external quality assurance took place during the process of writing up the self-evaluation report, with changes being more pronounced among those who had taken part in a Study Programme Committee. These findings are consistent with the results of other research on universities in Catalonia, which has shown that the participation of teaching staff in QA procedures mitigates their initial scepticism towards external quality assurance (Trullen and Rodríguez, 2013).

Prospects for the future (or the impact of the impact)

What are the implications of these findings and the project for AQU Catalunya’s activities in the future?

Since its inception the Agency has understood the importance of accountability in its activities. In 2003, for example, it organised the “Taking our own medicine: How to evaluate quality assurance agencies” seminar. In order to increase our efficacy, we need not only to measure it, but also to know and understand what works and what doesn’t. Impact studies provide us with a greater understanding of this and thereby enable us to design more effective and efficient procedures.

Impact analysis represents a considerable change in methodology compared to the ways in which we have assessed and evaluated the functioning of our QA procedures until now. As with almost all QA agencies in Europe, our evaluations have been aimed at process improvement and enhancement and have been undertaken at the end of the external review process (Kajaste et al., 2015).

Participation in this project has enabled us to realise and understand the usefulness and value of introducing results-based quality assurance, together with the viability, in terms of the cost, of a before-after design. Impact analysis does however require a design for QA procedures that clearly sets out the purpose and objectives of the impact evaluation. To sum up, our participation in this project has enabled us to reflect not only on the ways in which quality assurance is implemented, but also on the design of QA procedures.

References

AQU Catalunya (2003). Marc general per a la integració Europea. Barcelona: AQU Catalunya. Available at: http://www.aqu.cat/doc/doc_20197380_1.pdf

IMPALA (2013-16). The project website: http://www.impala-qa.eu/impala/

Kajaste, M., Prades, A., & Scheuthle, H. (2015). Impact evaluation from quality assurance agencies’ perspectives: methodological approaches, experiences and expectations. Quality in Higher Education, 21(3), 270–287.

Rodríguez, S. (2013). Panorama internacional de la evaluación de la calidad en la educación superior. Madrid: Ed. Síntesis.

Trullen, J., & Rodríguez, S. (2013). Faculty perceptions of instrumental and improvement reasons behind quality assessment in higher education: the roles of participation and identification. Studies in Higher Education, 39(5), 678–692.